(archived) Probability and statistics notes

Also using this as

Grade Distribution

Exam Score: 50%

Usual Performance: 50%

- Homework

- Midterm + Quizzes

- Non-standard assessments

- In-class performance

Features

-

Emphasis on comprehension and memorization

-

Requires strong calculus foundation

-

Fixed question types

Probability Section

Fundamentals of Probability Theory

Use

The probability of event

Law of Total Probability: Let

Bayes' Theorem: Let

i.e., Bayes' Theorem is a combination of the multiplication rule and the law of total probability.

If

Binomial distribution probability:

Multinomial distribution: Suppose a random experiment has

possible outcomes , with probabilities . In independent trials, let the random variables denote the number of occurrences of , respectively. The probability that occurs times, occurs times, ..., occurs times is

, where and .

Random Variables and Their Distributions (Memorize)

A random variable

The distribution function

The density function

When

Probability problems can be transformed into integrals of the probability density function:

Non-continuous Distributions

Geometric and hypergeometric distributions are omitted.

Binomial distribution:

The binomial distribution is unimodal. Since

, the maximum value is attained when is closest to .

Poisson distribution:

The Poisson distribution corresponds to the normalization of the Taylor expansion terms of

. Poisson's theorem: The binomial distribution converges to the Poisson distribution. Specifically, for

, when is sufficiently large (≥100), it can be approximated as , where .

Continuous Distributions

Uniform Distribution

Exponential Distribution

Gamma Distribution

Methods for Finding the Distribution of a Function of a Random Variable Y = f(X)

Taking the probability density function

- Determine the range of values corresponding to

. - Express

in terms of , and replace with . - Differentiate

to obtain .

For problems requiring case-by-case discussion:

- Determine the range of values corresponding to

and identify the breakpoints. - Within each interval, solve the inequality to obtain

, and compute . - Differentiate

to obtain , and summarize the results.

Note: When integrating

Multidimensional (Two-Dimensional) Random Variables and Their Distributions

Two-Dimensional Discrete Random Variable Distribution

It is essentially a table where the sum of all entries is 1. To find probabilities, simply add the probabilities at the corresponding positions.

Marginal Distributions:

Conditional Distribution Laws:

Two-Dimensional Continuous Random Variable Distribution

Equivalent to evaluating double integrals, with attention to the domain of integration.

Often, the property that the integral over the domain sums to 1 is used to find parameters, and then the double integral over a specified region is evaluated to compute probabilities.

Two-dimensional distribution function and density function:

Marginal densities:

Conditional distribution functions:

-

(the other half is omitted for brevity) -

Common question types:

One density, two conditionals, two marginals

- Given one density, find the remaining four.

- Given one conditional and its corresponding marginal, find the remaining three.

Given the two-dimensional density function

and , find the density function of :

- Determine the effective region

of . - Compute

, paying attention to case analysis. - Differentiate with respect to

to obtain the density function .

Farewell to convolutionIf

and are independent, then . If

and are independent, then .

Mathematical Expectation

Definition of Mathematical Expectation

Expectation for discrete variables:

Expectation for continuous variables:

The mathematical expectation exists when the sum of the series (or the integral) is absolutely convergent.

For the two-dimensional case, it can also be calculated as:

Expectation of a Function of a Random Variable

Properties of Mathematical Expectation

If

Variance

Definition of Variance

Let

,

The standard deviation is

Properties of Variance

When

When

Coefficient of variation:

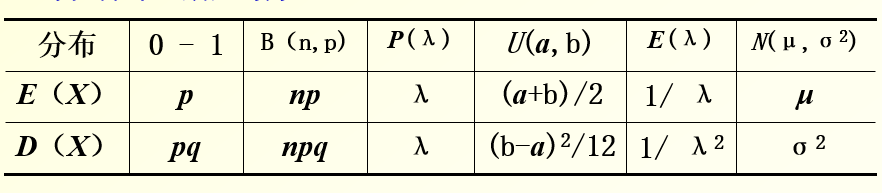

Common Distributions: Expectations and Variances (Memorize)

Raw Moments and Central Moments

Therefore, variance is the second-order central moment.

Covariance and Correlation Coefficient

Definition of Covariance

The proof is similar to that of variance and is omitted here.

Properties of Covariance

If

Proof: This follows directly from the properties of expectation.

Consequently, if

and are independent,

Standardization of Random Variables

It has an expectation of 0, a variance of 1, and is dimensionless.

Definition of Correlation Coefficient (Memorize)

The correlation coefficient

Obviously,

To compute the correlation coefficient, five expectations are required:

That is,

Properties of the Correlation Coefficient

When

When

To prove that

Normal Distribution

Standard Normal Distribution

Even function, bell-shaped curve.

These two properties are often used in exams when calculating probabilities, andshould be retained instead of in the answer (or when looking up values in a table).

Normal Distribution

Since

Differentiating yields

determines the horizontal shift of the graph (expectation), and determines the "peakedness" of the graph (standard deviation).

A linear combination of multiple independent normal distributions is still a normal distribution.

In particular, if they are all

, their average is .

Bivariate Normal Distribution

Special Case:

General Case (Including Correlation Coefficient):

For the bivariate normal distribution, its marginal and conditional distributions are normal. Additionally, being uncorrelated is equivalent to being independent.

Natural Exponential Family

Among common distributions, all except the uniform distribution can be expressed in this form.

Its mean parameter (expectation

Limit Theorems

Chebyshev's Inequality

Law of Large Numbers

Consider a sequence of random variables

If

-

Chebyshev's Law of Large Numbers:

A necessary and sufficient condition is that the variances of the random variables are uniformly bounded, i.e., there exists a constant

such that , which ensures compliance with the Law of Large Numbers. -

Law of Large Numbers for Independent and Identically Distributed (i.i.d.) Variables:

As long as the

are independent and identically distributed with and , the Law of Large Numbers is guaranteed to hold. -

Bernoulli's Law of Large Numbers:

If

, then .

Central Limit Theorem

Let

It follows that

Suppose

, and let

That is,

approximately follows

Statistics Section

Common Distributions

Chi-Square Distribution — Sum of Squares of Normals

The sum of squares of normally distributed variables

The chi-square distribution satisfies additivity.

t-Distribution — Normal Divided by a Number

The distribution is similar to the normal distribution but has thicker tails.

F-Distribution — Ratio of Normal Sums of Squares

Common Statistical Measures

Sample Mean

Sample Variance

Sampling Distribution Theorem

Case 1 (Known Variance)

Let the sample

and

Part 2 (Known Mean)

The sample

Third (Multiple Populations)

Probability and Statistics has successfully turned into a liberal art

If

where

If

Point Estimation

Method of Moments Estimation

Set the sample mean equal to the expectation function (which can also be the squared expectation), express the parameter

Maximum Likelihood Estimation

Discrete Case: Write the probability of the observed event as a function of the parameter

Continuous Case: The function to be considered is

The maximum likelihood estimate is the maximum value of

Criteria for Estimator Evaluation

Unbiased Estimator: An estimator is unbiased if the expected value of the estimate equals the true parameter, i.e.,

Asymptotically Unbiased Estimator: An estimator is asymptotically unbiased if

Efficiency Criterion: If

Consistency Criterion: If

Mean Squared Error (MSE) Criterion: If

Specifically,

is more efficient than in terms of MSE.

Interval Estimation (Memorize)

Only for normal distribution

Two-Sided Confidence Interval

Estimate the

If

If

Estimate the

One-Sided Confidence

Estimate the

If

If

Estimate the

The one-sided lower confidence limit is

Hypothesis Testing

Process

Proof by contradiction with a probabilistic nature

- First, state the null hypothesis

and the alternative hypothesis . - Under the assumption that

holds, construct the distribution satisfied by the sample. - Determine the rejection region

based on the value of . - Substitute the observed value

. If , reject the null hypothesis.

Type I and Type II Errors and Their Probabilities

- Type I Error (False Positive): When

is true, the sample value . - Type II Error (False Negative): When

is true, the sample value .

The probability of a Type I error,

The probability of a Type II error is